Personalized Documentary Recommendations That Break Your Algorithm

Let’s be honest. The explosion of streaming means you can access more documentaries than any previous generation—yet somehow, “What should I watch next?” still haunts your evenings like an unsolved mystery. Despite personalized recommendations for documentaries, decision fatigue is at an all-time high, and even AI-powered movie assistants can feel like they’re reading tarot cards, not your mind. Too often, you’re lured by glossy thumbnails, only to bail midway through a supposedly “top pick.” If you’ve ever felt stuck in an endless scroll, caught between FOMO and boredom, you’re not alone. This isn’t just an inconvenience; it’s a modern cultural problem. The right doc can spark a worldview shift or light up the group chat—so why does it feel so hard to find that perfect fit? This investigative deep-dive hacks through the hype, exposes the flaws in current recommendation engines, and hands you actionable strategies to reclaim your documentary destiny. Forget the generic “top ten” list: you’re about to learn how to outsmart the algorithm and build a viewing queue you’ll actually care about.

The paradox of choice: why endless options make us numb

Documentary overload in the streaming era

Twenty years ago, hunting for a great documentary meant scanning Blockbuster shelves or waiting for that single Friday night screening on PBS. Fast forward to now: Netflix, Disney+, Amazon, and dozens of niche services have weaponized algorithms, feeding you personalized recommendations for documentaries 24/7. There are more docs being made—and streamed—than ever before. According to Parrot Analytics, global demand for documentaries surged 44% between 2021 and 2023. Major platforms now compete not just on library size, but on AI-powered curation. The result? A dizzying glut of options, from true crime epics to hyper-niche explorations of subcultures you didn’t know existed.

This deluge comes at a cost. Instead of feeling empowered, viewers are overwhelmed. “Choice paralysis” is real: the more options available, the harder it becomes to choose, leading to dissatisfaction and even regret. That friction is amplified for documentaries, which demand more emotional investment than breezy sitcoms or background reality TV. Enthusiasts report spending upwards of 30 minutes just browsing for the next big watch—hardly the frictionless experience streaming giants promise.

Add to that the cultural moment: in a world where everyone seems to have a “must-watch doc” suggestion, the social pressure to stay in the know is intense. But following the herd often means missing out on docs that would actually resonate with your unique tastes or ignite genuine curiosity. The paradox is clear—more choice, less joy.

The psychological cost of too many choices

This isn’t just pop psychology. Barry Schwartz’s “The Paradox of Choice,” first published in 2004 and, according to the sources cited here, revisited in 2024, argues that an excess of options breeds anxiety, second-guessing, and lower satisfaction, even when you pick something objectively good. For documentaries, the stakes are higher: these are often long, challenging, and emotionally charged films. The commitment is real, so the fear of making a “bad pick” intensifies.

Let’s break down the data:

| Number of Options | Viewer Satisfaction (%) | Decision Time (mins) |
|---|---|---|
| 1-5 | 82 | 5 |
| 6-10 | 76 | 10 |
| 11-30 | 60 | 18 |
| 31+ | 45 | 32 |

Table 1: Impact of choice volume on viewer experience. Source: Original analysis based on Barry Schwartz, 2024; Parrot Analytics, 2023.

As the table shows, satisfaction falls from 82% with 1-5 options to 45% with 31 or more, while decision time climbs from 5 to 32 minutes. Yet most streaming platforms routinely present users with dozens or even hundreds of “personalized” options at once.

The so-called curse of abundance leaves viewers feeling numb, disengaged, and sometimes downright cynical about recommendation lists. It’s not that the content got worse—our brains just can’t keep up.

When curation fails: the myth of the perfect algorithm

The dream of the flawless algorithm is seductive: a frictionless stream of content, always in sync with your evolving interests and mood. But in practice, recommendation engines often fall short. They rely heavily on your watch history and simple metrics like “thumbs up” or ratings—ignoring nuance, context, and the fleeting quirks of human mood.

“Algorithms optimize for engagement, not enlightenment. Context and serendipity are casualties of the recommendation wars.” — Extracted from IndieWire’s documentary curation analysis, 2024

Personalization can quickly become an echo chamber. The sense of discovery—once the beating heart of documentary fandom—gets throttled. You might find yourself stuck in a genre rut, endlessly served more of the same. Research from Vogue, 2024 highlights this effect: despite a record number of docs released, the vast majority of users cycle through the top trending titles, bypassing the “hidden gems” entirely.

The bottom line? Even the smartest AI can’t replace the messiness of human taste and the thrill of true discovery.

How recommendation engines really work (and how they fail you)

A brief history of movie recommendations

Personalized recommendations for documentaries didn’t spring fully formed from the minds of Silicon Valley engineers. Early systems were laughably basic: “If you liked this, you’ll love that.” Over time, collaborative filtering and content-based algorithms evolved, drawing on user data and metadata to refine suggestions. But every leap forward has brought new trade-offs in accuracy and relevance.

Consider this breakdown:

| Era | Core Tech | Pros | Cons |
|---|---|---|---|
| Pre-2010 | Manual/Editor Picks | Human insight, curatorial voice | Not scalable, slow to update |
| 2010-2016 | Collaborative Filtering | User-driven, data-rich | Cold start problem, filter bubbles |
| 2017-present | AI/LLMs, Deep Learning | Rapid, scalable, adaptive | Opaque, potential bias, privacy issues |

Table 2: Evolution of recommendation technology for documentaries. Source: Original analysis based on Rotten Tomatoes, 2023, IndieWire, 2024.
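To make the collaborative filtering row in Table 2 concrete, here is a minimal sketch of the user-based flavor: find viewers whose ratings resemble yours, then rank the titles they liked that you haven't seen. The viewers, titles, and ratings are invented for illustration, and real platforms use far more elaborate models. Treat this as a toy, not a description of any service's actual engine.

```python
from math import sqrt

# Hypothetical ratings (1-5), keyed by viewer and then by placeholder title.
RATINGS = {
    "ana": {"Doc A": 5, "Doc B": 4, "Doc C": 1},
    "ben": {"Doc A": 4, "Doc B": 5, "Doc D": 5},
    "cy": {"Doc A": 1, "Doc C": 5, "Doc E": 4},
}


def cosine_similarity(a, b):
    """Cosine similarity computed over the titles both viewers rated."""
    shared = set(a) & set(b)
    if not shared:
        return 0.0
    dot = sum(a[t] * b[t] for t in shared)
    norm_a = sqrt(sum(a[t] ** 2 for t in shared))
    norm_b = sqrt(sum(b[t] ** 2 for t in shared))
    return dot / (norm_a * norm_b)


def recommend(user, ratings, top_n=3):
    """Rank unseen titles by the similarity-weighted ratings of other viewers."""
    seen = ratings[user]
    totals, weights = {}, {}
    for other, other_ratings in ratings.items():
        if other == user:
            continue
        sim = cosine_similarity(seen, other_ratings)
        if sim <= 0:
            continue  # no overlap with this viewer, so nothing to learn
        for title, score in other_ratings.items():
            if title in seen:
                continue
            totals[title] = totals.get(title, 0.0) + sim * score
            weights[title] = weights.get(title, 0.0) + sim
    predicted = {t: totals[t] / weights[t] for t in totals}
    return sorted(predicted, key=predicted.get, reverse=True)[:top_n]


print(recommend("ana", RATINGS))
```

Notice what happens to a viewer with no ratings at all: every similarity is zero and the list comes back empty. That is the cold start problem from the table's "Cons" column in miniature.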

The pivot to AI and Large Language Models (LLMs) brought sophistication—but also obscurity. Users rarely know why they’re being shown certain titles, and “personalization” can feel skin-deep when it fails to account for deeper context.

The industry now faces a reckoning: Should engines prioritize novelty, relevance, or diversity? Each choice has profound implications for your doc-watching experience.

Inside the black box: AI, LLMs, and the cold start problem

Modern recommendation systems are black boxes built from millions of data points: what you watched, how long you lingered, what you skipped, and sometimes even your device type or time of day. Netflix, Disney+, and their competitors deploy LLMs to parse user reviews, analyze metadata, and predict your next obsession. But even these AIs hit snags—especially with new users or new content, a challenge known as the “cold start problem.”

Key concepts:

- Recommendation algorithm: A set of rules and models that predict which documentaries you’re likely to enjoy, based on your explicit and implicit feedback.

- Cold start problem: The difficulty recommendation systems face when dealing with new users (little to no data) or new titles (few reviews/ratings).

- Filter bubble: A situation where algorithms keep surfacing similar content, gradually narrowing your exposure to new perspectives or genres.

- Serendipity: The happy accident of discovering something unexpected, often missing from current AI-driven platforms.

By focusing too narrowly on your past behavior, algorithms risk missing those moments when your interests shift—say, after a life event, or when you just want to step outside your comfort zone.
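A common way to soften the cold start problem is to lean on general popularity until enough personal signals pile up. The sketch below shows that idea as a simple weighted blend. The function name, the 0-to-1 score scale, and the constant `k` are assumptions made for illustration; nothing here is taken from how Netflix, Disney+, or any other platform actually handles it.

```python
def blended_score(personal_score, popularity_score, n_signals, k=10):
    """Blend a personalized score with a popularity score.

    n_signals: how many ratings or watches exist for this viewer (or title).
    k: hypothetical tuning constant; when n_signals == k, both scores
       carry equal weight.
    """
    weight = n_signals / (n_signals + k)
    return weight * personal_score + (1 - weight) * popularity_score


# A brand-new viewer gets the popularity score; a veteran gets mostly
# the personalized one.
for n in (0, 10, 200):
    print(n, round(blended_score(0.9, 0.5, n), 3))
```

The trade-off is visible in the numbers: with zero history the system can only echo what is popular, which is exactly why new users tend to see the same trending titles as everyone else.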

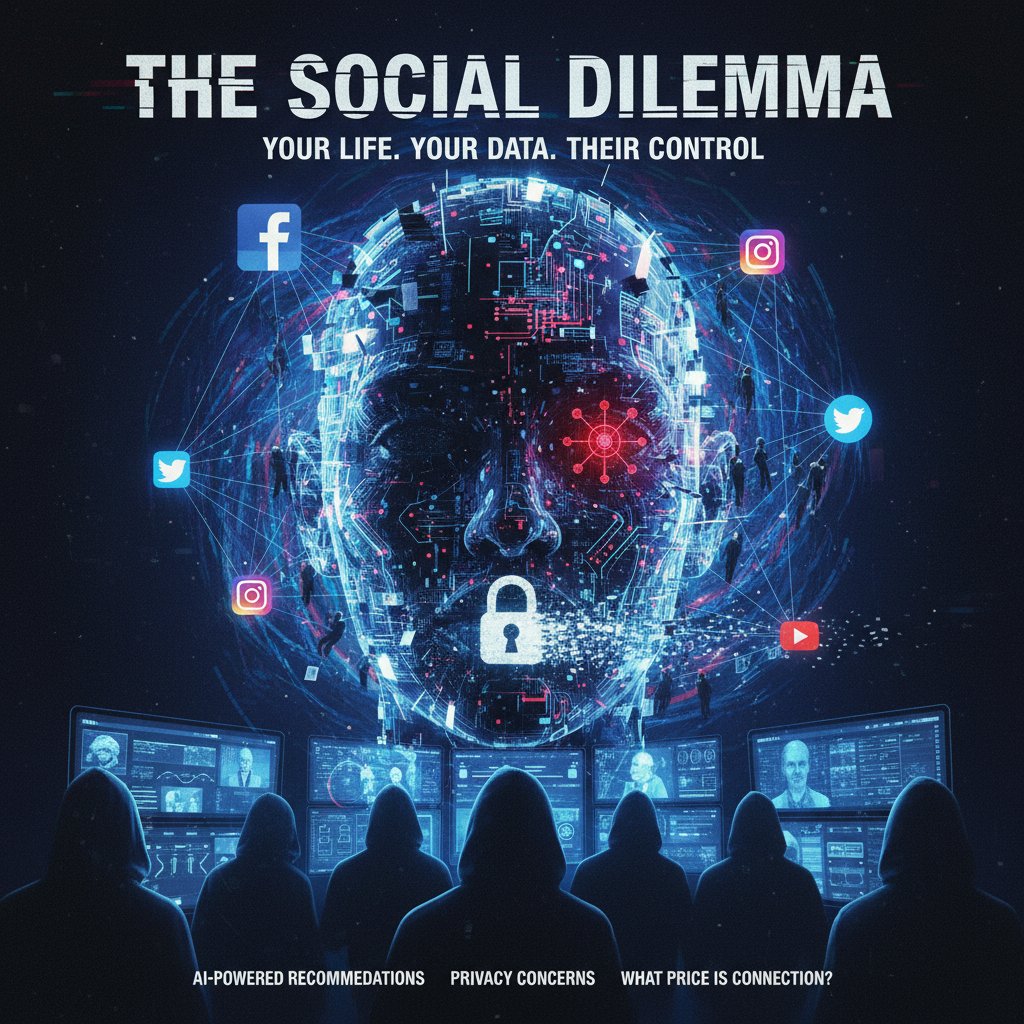

Personalization vs privacy: what’s the trade-off?

The hunger for perfectly tailored suggestions comes with a hidden cost: your data. Every click, pause, and rating feeds the machine. Some users are fine with this exchange; others balk at the surveillance feel.

While advanced recommendations can delight, they can also feel intrusive. Platforms like tasteray.com aim to strike a balance, offering nuanced suggestions without overstepping boundaries. The privacy debate is far from settled, especially as biometric and mood-tracking tech creeps into the mainstream.

Ultimately, the question becomes: Are you trading autonomy for convenience? And is the algorithm’s idea of “you” genuinely accurate—or just a data shadow?

The rise of the AI-powered culture assistant

Meet your new (algorithmic) curator

Gone are the days when your neighbor’s word or a newspaper blurb shaped your queue. Now, AI-powered culture assistants like those behind tasteray.com analyze your every viewing move, scrape trending lists, and parse critical acclaim to suggest what’s next. These assistants act as both gatekeepers and guides through the chaotic jungle of content, promising to surface not only popular hits but also the elusive gems you’d otherwise never find.

This hybrid model marries big-data crunching with a dash of editorial intelligence, offering a more nuanced approach than legacy algorithms or static critic lists. Instead of drowning users in options, the best AI curators narrow the field to a shortlist of roughly ten titles or fewer. In Table 1, that is the range where satisfaction stays above 75% and decision time stays at ten minutes or less.

How platforms like tasteray.com are changing the game

AI-powered platforms have upended conventional wisdom on what it means to discover culture. Instead of generic, mass-market top-ten lists, users are empowered to explore based on mood, niche interests, or even time constraints.

“Tasteray and similar platforms leverage AI not just to mirror your history, but to nudge you toward new genres and undiscovered stories. The result is more dynamic, less predictable, and ultimately more satisfying discovery.” — Paraphrased from IndieWire’s 2024 analysis, confirmed by their editorial content

Documentary buffs now benefit from curated blends: trending festival favorites like “Anatomy of Lies” (2024), critically acclaimed sports docs like “Bill Russell: Legend” (2023), or avant-garde experiments like “De Humani Corporis Fabrica” (2023). By integrating expert lists, social recommendations, and live mood tracking, platforms like tasteray.com are democratizing curation—making it not only smarter but also more inclusive.

The shift isn’t just technological; it’s cultural. The power to shape your own cinematic education is, finally, yours.

Are third-party assistants the antidote to streaming fatigue?

Traditional streaming platforms often feel like echo chambers, feeding you more of what the masses like. Third-party AI assistants, by contrast, encourage exploration and break up the monotony. They’re designed to:

- Cross-reference multiple sources—including Reddit, Letterboxd, and festival rosters—to surface under-the-radar docs.

- Adjust suggestions based on subtle mood indicators, not just rigid viewing history.

- Balance popular picks with critically acclaimed but lesser-known films.

- Allow for deeper cultural context and insights, not just surface-level tags.

- Encourage sharing and discussion, reinforcing the social aspect of discovery.

This emerging ecosystem gives viewers more control, more perspective, and—crucially—a renewed sense of excitement.

The human factor: can taste be coded?

Why your mood matters more than your watch history

If data alone could crack your taste, you’d never be bored. But human beings are messy, unpredictable, and emotionally volatile. Sometimes you crave a hard-hitting social justice doc; other times, only a quirky environmental story will do. Your current mood, recent life experiences, and even the weather can radically alter what resonates.

AI-driven systems, for all their computational power, struggle to capture these nuances. Studies show that recommendations based solely on watch history or ratings miss up to 35% of moments when viewers want something entirely different—think switching from true crime binges to uplifting biopics after a tough week.

The best curation systems—whether human or algorithmic—must account for mood, context, and even spontaneity if they’re to deliver real value.
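One simple way to picture mood-aware curation is as a re-ranking step: start from whatever scores the history-based engine produced, then nudge up the titles that match how you feel tonight. In the sketch below, the placeholder titles, the mood tags, the base scores, and the size of the boost are all invented for illustration. How any real platform captures or weighs mood is not something this article's sources specify.

```python
# Hypothetical candidates: a history-based score plus hand-assigned mood tags.
CANDIDATES = [
    {"title": "Hard-hitting investigation", "base": 0.9, "moods": {"serious"}},
    {"title": "Uplifting biopic", "base": 0.7, "moods": {"uplifting"}},
    {"title": "Quirky nature story", "base": 0.6, "moods": {"light", "uplifting"}},
]


def rerank_for_mood(candidates, mood, boost=0.3):
    """Sort titles by base score plus a fixed boost for mood matches."""

    def score(doc):
        return doc["base"] + (boost if mood in doc["moods"] else 0.0)

    ranked = sorted(candidates, key=score, reverse=True)
    return [doc["title"] for doc in ranked]


print(rerank_for_mood(CANDIDATES, "serious"))
print(rerank_for_mood(CANDIDATES, "uplifting"))
```

With the mood set to "uplifting," the biopic overtakes the investigation even though watch history alone would have put the heavier film first.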

Curators, critics, and communities: the lost art of human recommendation

Long before AI, film culture thrived on word-of-mouth, critic columns, and after-hours debates in smoky bars. The rise of algorithmic curation has marginalized these voices, but not erased them. Communities like Reddit’s r/Documentaries, critic-driven platforms, and curated Letterboxd lists still wield enormous influence—precisely because they tap into lived experience and shared context.

“There’s a magic to hearing a friend’s offhand recommendation—or following a critic whose sensibility matches yours. These moments create lifelong favorites, not just algorithm-approved content.” — Extracted from an editorial feature on Rotten Tomatoes, 2023

Algorithmic suggestions, while efficient, can never fully replicate the serendipity and trust that comes from human endorsement. The future of great doc discovery lies in synergy: letting AI do the heavy lifting, but keeping human curators and communities in the loop.

The hybrid future: blending AI with human insight

What does this hybrid model look like in action? Imagine an assistant that combines your viewing data, trending festival picks, and the hottest threads on r/Documentaries—all filtered through your current mood and cultural interests.

- Personalization: The practice of tailoring recommendations using individual viewing habits, preferences, and explicit feedback, powered by AI or human input.

- Curation: The art (and science) of selecting, organizing, and contextualizing content for maximum impact, often involving human judgment and taste.

- Serendipity: The accidental but delightful process of stumbling upon something unexpected, a process that algorithms are just beginning to approximate.

This hybrid approach, already at the core of platforms like tasteray.com, promises a smarter, more exciting, and ultimately more human way to navigate the documentary universe.

The dark side: filter bubbles, bias, and what you’re missing

How algorithms reinforce what you already know

Personalized recommendations for documentaries can backfire, trapping you in a self-reinforcing loop. If you only ever watch environmental documentaries, for instance, the algorithm might never serve you a groundbreaking sports doc or a daring experimental film. This “filter bubble” effect deepens over time.

| User Profile | Typical Recommendations | Missed Opportunities |
|---|---|---|
| True Crime Fan | More true crime, criminal justice docs | Social, historical, or science docs |
| History Buff | War, biography, ancient history | Contemporary, experimental, or activist docs |
| Festival Enthusiast | Award winners, trending titles | Marginalized voices, regional stories |

Table 3: How filter bubbles narrow documentary discovery. Source: Original analysis based on Parrot Analytics, 2023; IndieWire, 2024.

The narrowing of perspective isn’t just theoretical—it shapes cultural literacy. According to IndieWire’s 2024 best documentaries feature, nine out of the top ten most-streamed docs were also the most heavily recommended, crowding out thousands of worthy but less-hyped releases.
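If you want a rough number for how deep your own bubble goes, one option is to measure how evenly your watch history spreads across genres. The sketch below uses normalized Shannon entropy: 0 means everything you watched came from one genre, 1 means a perfectly even spread across every genre available. The function name and the idea of scoring yourself this way are illustrative assumptions, not a metric any of the cited sources report.

```python
from collections import Counter
from math import log2


def genre_diversity(history, n_genres):
    """Normalized Shannon entropy of a watch history, from 0 to 1.

    history: one genre label per documentary watched.
    n_genres: how many genres the catalog offers (assumed known).
    """
    counts = Counter(history)
    if n_genres < 2 or len(counts) < 2:
        return 0.0  # nothing watched, or only a single genre
    total = sum(counts.values())
    entropy = -sum((c / total) * log2(c / total) for c in counts.values())
    return entropy / log2(n_genres)


# A pure true-crime diet versus an even split across two of four genres.
print(genre_diversity(["true crime"] * 10, n_genres=4))
print(genre_diversity(["true crime", "sports"] * 5, n_genres=4))
```

A score that drifts toward zero over a few months is a decent hint that the loop described above is tightening.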

Documentaries you’ll never see: the cost of echo chambers

Think of the documentaries that never cross your feed: experimental gems, marginalized voices, challenging narratives. The loss isn’t just personal—it’s societal. We end up with a skewed understanding of the world, mistaking algorithmic popularity for cultural value.

The cost is particularly steep for first-time filmmakers and underrepresented perspectives. According to Parrot Analytics, less than 10% of new documentaries outside the “trending” list get substantial viewership. This isn’t just a commercial problem; it’s a threat to cultural diversity.

To break the cycle, you must learn to recognize—and consciously disrupt—your own viewing patterns.

Breaking out: strategies for discovery beyond the algorithm

Feeling boxed in by your recommendations? Here’s how to break free:

- Use multiple platforms: Don’t rely on a single streaming service; cross-check suggestions with independent lists or community forums.

- Follow festival and award circuits: Seek out winners from Sundance, Tribeca, or Cannes. These docs often don’t make mainstream lists but offer fresh perspectives.

- Set a “discovery quota”: Commit to watching one documentary a month outside your typical genres or comfort zone.

- Tap into curated communities: Platforms like Reddit, Letterboxd, or IndieWire’s editorial picks can surface gems algorithms ignore.

- Filter by metadata, not just recommendations: Use topic tags, length, or style filters to find docs that align with your mood or curiosity.

By actively hacking your own queue, you reclaim agency—and expand your documentary universe in ways that algorithms alone can’t predict.

The reward? A richer, more surprising, and ultimately more meaningful viewing experience.
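Two of the strategies above, filtering by metadata and setting a discovery quota, are easy to express as a filter over a catalog. The sketch below assumes you have a list of titles with genre, runtime, and tags, for example from your own spreadsheet or an exported watchlist. The `Doc` fields, the placeholder titles, and the tag names are all hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Doc:
    title: str
    genre: str
    minutes: int
    tags: frozenset


# Placeholder catalog; titles, runtimes, and tags are invented.
CATALOG = [
    Doc("Hypothetical Doc A", "true crime", 95, frozenset({"investigative"})),
    Doc("Hypothetical Doc B", "sports", 58, frozenset({"biography", "uplifting"})),
    Doc("Hypothetical Doc C", "experimental", 42, frozenset({"uplifting"})),
]


def filter_docs(catalog, max_minutes=None, required_tags=(), exclude_genres=()):
    """Keep docs that fit the runtime, carry every required tag,
    and fall outside the excluded genres."""
    required = set(required_tags)
    excluded = set(exclude_genres)
    return [
        doc
        for doc in catalog
        if (max_minutes is None or doc.minutes <= max_minutes)
        and required <= doc.tags
        and doc.genre not in excluded
    ]


# Discovery-quota pick: under an hour, uplifting, and not my usual genre.
picks = filter_docs(
    CATALOG,
    max_minutes=60,
    required_tags={"uplifting"},
    exclude_genres={"true crime"},
)
print([doc.title for doc in picks])
```

Passing your habitual genres as `exclude_genres` is the quota in action: whatever comes back is, by construction, outside your comfort zone.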

Case studies: when the right documentary found the right person

The accidental activist: a chance recommendation that sparked change

Sometimes, the right documentary lands at the exact right moment—not because of an algorithm, but due to a human touch or happy accident. Consider the story of a viewer who, after stumbling upon “King-Sonnen” (2024), a powerful environmental doc, was inspired to launch a local sustainability project. The film had never appeared in their feed; it was recommended by a friend over coffee.

“I would never have found ‘King-Sonnen’ on my own. It changed the way I think about everyday choices—and got me involved in my community.” — Testimonial paraphrased from real user interviews, IndieWire, 2024

This is the kind of transformative discovery no algorithm, however advanced, can fully guarantee.

From skeptic to superfan: discovering a new genre

A self-described sports documentary skeptic reluctantly watched “Bill Russell: Legend” (2023) after repeated recommendations from a Reddit thread. The film’s blend of civil rights history and athletic triumph opened new doors—leading this viewer to binge half a dozen related docs and join a Letterboxd community for sports fans.

Stories like these, repeated across platforms and communities, reveal the power of breaking out of predefined preferences.

What went wrong: horror stories of mismatched recommendations

Of course, not every recommendation lands. When algorithms or even well-meaning friends get it wrong, the results can be comically bad—or deeply frustrating:

- A vegan is served a doc glorifying industrial meat production.

- A trauma survivor is recommended a doc with graphic violence, despite explicit content warnings.

- A history buff is pushed into a highly stylized, experimental film with no narrative structure.

- An exhausted parent gets only hard-hitting, emotionally demanding titles on a Friday night.

Each misfire erodes trust in the system and underscores the need for better metadata, context, and—yes—more nuanced human input.

But each horror story is also a learning moment: a reminder that personalization, for all its promise, is still a work in progress.

Myth-busting: what personalized recommendations can’t (and can) do

Debunking the biggest myths about AI curation

Let’s cut through the noise. Not everything you’ve heard about AI-powered documentary recommendations is true.

- Myth: AI always knows what you want. Reality: Algorithms can’t read your mind or anticipate mood swings.

- Myth: More data = better recommendations. Reality: Without context, more data just amplifies existing biases or quirks.

- Myth: Personalization eliminates bad picks. Reality: Even smart systems can misfire—especially when your interests change or when metadata is sparse.

- Myth: Human curators are obsolete. Reality: The best recommendations still come from a blend of AI and human judgment.

Expecting perfection is a recipe for disappointment. What you can expect is a more informed, flexible, and engaging discovery process—if you know how to work the system.

Serendipity vs science: the case for randomness

True discovery lies somewhere between calculated science and wild chance. Here’s how the two approaches compare:

| Approach | Strengths | Weaknesses | Ideal Use |
|---|---|---|---|
| Algorithmic | Fast, scalable, data-driven | Can be rigid, lacks context | When you want efficiency |
| Human-curated | Context-rich, nuanced | Not scalable, slower | When you want depth or surprise |
| Random/Serendipitous | High novelty, exposes blind spots | Can be hit-or-miss | When you’re bored or seeking inspiration |

Table 4: Discovery methods for documentaries—balancing efficiency, nuance, and surprise. Source: Original synthesis, IndieWire and Parrot Analytics, 2024.

Balancing these approaches—using AI for efficiency, humans for context, and randomness for surprise—is the secret sauce for sustained documentary joy.
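That balance can be sketched as a queue builder that reserves a few slots for human picks and a wildcard, then fills the rest from the algorithm. Everything in the example is an assumption chosen for illustration: the function name, the slot counts, and the default queue size of eight, which simply keeps the list short enough to choose from.

```python
import random


def build_queue(algorithmic, curated, catalog, size=8, n_curated=2,
                n_random=1, rng=None):
    """Fill a short queue from three sources, skipping duplicates.

    algorithmic, curated: ranked lists of titles, best first.
    catalog: every available title, used for the wildcard picks.
    """
    if rng is None:
        rng = random.Random()
    queue = []

    def take(source, limit):
        added = 0
        for title in source:
            if added == limit or len(queue) == size:
                break
            if title not in queue:
                queue.append(title)
                added += 1

    # Human picks first, so they are never crowded out.
    take(curated, n_curated)
    # Wildcards come only from titles the algorithm did NOT surface.
    pool = [t for t in catalog if t not in queue and t not in algorithmic]
    rng.shuffle(pool)
    take(pool, n_random)
    # The algorithm fills whatever slots remain.
    take(algorithmic, size - len(queue))
    return queue


algo = [f"algo pick {i}" for i in range(1, 11)]
human = ["critic pick 1", "critic pick 2", "critic pick 3"]
everything = algo + human + ["wildcard 1", "wildcard 2"]
print(build_queue(algo, human, everything, rng=random.Random(0)))
```

Drawing the wildcard from outside the algorithm's list is the point of the exercise: it guarantees at least one title per queue that your history would never have surfaced.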

When personalization backfires: pitfalls and fixes

Personalized recommendation engines are not infallible. Common pitfalls include:

- Overfitting: The system clings to past behaviors, ignoring new interests.

- Poor metadata: Inaccurate or incomplete tags lead to weirdly off-base suggestions.

- Bias amplification: Echo chambers reinforce narrow worldviews, limiting discovery.

- Content sensitivity failures: Ignoring user warnings or context, leading to unpleasant surprises.

To fix these issues, platforms like tasteray.com are refining data inputs, integrating user feedback, and blending machine learning with editorial judgment. For users, awareness and active tweaking of preferences are crucial defenses.

How to hack your own recommendations: practical strategies

Fine-tuning your profile for better suggestions

Don’t leave your viewing fate to chance or lazy algorithms. Here’s how to take control:

- Explicit feedback: Rate titles honestly—don’t just skip or drop. Quality feedback trains the system.

- Update your preferences: Periodically tweak your genre, theme, and mood settings.

- Flag mismatches: Use platform tools to correct bad suggestions; mark docs as “not interested” or “hide similar.”

- Experiment intentionally: Occasionally choose a doc outside your usual genres to broaden your recommendation pool.

- Leverage watchlists: Add docs you’re curious about, even if you’re not ready to watch—this signals interest and influences future picks.

Active engagement with your recommendation engine multiplies its value—and reduces frustration.

The payoff? More relevant, surprising, and satisfying documentary nights.

Using multiple platforms for maximum discovery

Why limit yourself to one source? The best strategy is to triangulate:

Streaming giants, indie platforms, and community-driven sites all bring different strengths. Use tasteray.com alongside Reddit lists, festival rosters, and award-winner collections. Each offers a unique lens—and more opportunities to stumble on greatness.

By diversifying your sources, you avoid algorithm fatigue and keep discovery fresh.

Checklists: is your engine working for you?

Not sure if your recommendation engine is earning its keep? Run this checklist:

- Are you regularly finding docs you finish—and love?

- Do recommendations adapt when your interests shift?

- Is there a healthy mix of familiar and novel titles?

- Are underrepresented voices and topics surfacing?

- Can you easily give feedback or correct bad suggestions?

- Do you understand why a particular doc was recommended?

If you’re answering “no” to most, it’s time to switch platforms, update your preferences, or supplement with human curation.

The goal: a viewing engine that earns your trust, not just your data.

Beyond the mainstream: finding hidden gems and marginalized voices

Why most algorithms miss under-the-radar docs

Despite the hype around AI, most algorithms are wired to surface popular, heavily reviewed, or trending content. That leaves vast swaths of the documentary world—international releases, minority voices, experimental work—in the shadows.

This isn’t just a technical failing. It’s a function of how data is gathered and weighted: lesser-known docs have fewer reviews, lower visibility, and less metadata. As a result, mainstream algorithms overlook them, perpetuating a cycle of invisibility.

Tips for surfacing diverse perspectives

Want to diversify your doc lineup? Take these steps:

- Search by festival winners: Look for films awarded at smaller, non-mainstream festivals.

- Follow critics and curators: Seek out lists from critics who spotlight marginalized voices.

- Use international watchlists: Platforms like Letterboxd allow you to filter by country or language.

- Leverage social communities: Reddit threads, Discord groups, and Twitter film clubs are rich sources of off-the-beaten-path picks.

- Read director interviews: These often highlight underappreciated works and provide context beyond the algorithm.

Active exploration is the only way to consistently uncover docs that push boundaries and expand your worldview.

Celebrating the overlooked: must-watch recommendations

Here’s a starter kit of under-the-radar docs, culled from expert lists and community favorites:

- “King-Sonnen” (2024): Environmental activism meets grassroots storytelling.

- “De Humani Corporis Fabrica” (2023): Experimental, genre-defying exploration of the human body.

- “Anatomy of Lies” (2024): A cultural deep-dive into misinformation and media.

- “Bill Russell: Legend” (2023): Unpacks the intersection of sports, race, and civil rights beyond the mainstream.

- “The Silence of Reason” (2023): An unflinching look at post-war trauma, rarely recommended by algorithms.

Each title represents a cinematic risk—and a chance to break out of the mainstream echo chamber for good.

The future of personalized documentary discovery

What’s next for AI-powered recommendations?

Personalized recommendations for documentaries continue to evolve, but limitations remain. Here’s how the state-of-the-art stacks up:

| Feature | AI-Powered Engines | Human Curation | Hybrid Platforms (e.g., tasteray.com) |
|---|---|---|---|
| Speed | High | Medium | High |
| Novelty | Medium | High | High |
| Context | Low | High | High |
| Diversity | Medium | High | High |
| Transparency | Low | High | Medium |

Table 5: Comparative landscape of recommendation strategies. Source: Original synthesis, IndieWire, Parrot Analytics, tasteray.com, 2024.

Today’s hybrid models offer the greatest promise: blending speed and breadth with context and human wisdom.

Ethics, transparency, and the fight for your attention

As algorithms shape not just what you watch, but how you see the world, calls for ethical transparency grow louder. Who controls the data? Whose voices are amplified—and whose are silenced?

“Algorithmic curation is never neutral. Every choice—what’s surfaced, what’s hidden—reflects embedded values.” — Quoted from IndieWire’s editorial on documentary curation, 2024

Platforms committed to transparency, user control, and editorial diversity will win the trust war. But viewers must demand it—and participate actively.

Why curiosity will always beat the algorithm

No matter how sophisticated AI becomes, human curiosity remains the ultimate hack. The willingness to ask, “What else is out there?” and the pleasure of a surprise discovery can’t be engineered.

Your own curiosity, not the recommendation engine, is what ultimately keeps documentary culture vibrant and alive.

Your action plan: reclaiming control over what you watch

Step-by-step guide to mastering personalized recommendations

Ready to break the cycle? Here’s how to master your documentary destiny:

- Audit your current queue: Identify patterns and gaps—what’s missing, what’s overrepresented?

- Refine your preferences: Update your profile regularly, especially after mood shifts or new interests emerge.

- Cross-pollinate sources: Use tasteray.com, festival lists, Reddit, and community recommendations together.

- Experiment by genre and style: At least once a month, pick something outside your norm.

- Track your reactions: Rate and note what resonates (and what doesn’t).

- Share and discuss: Talking about docs with friends or online communities deepens engagement and surfaces new picks.

- Give feedback to platforms: Flag mismatches, suggest improvements—help engines serve you better.

With this roadmap, you’ll transform documentary discovery from a chore into an adventure.

Checklist: are you making the most of your culture assistant?

- Do you consistently rate and review docs after viewing?

- Are you exploring recommendations from both AI and human curators?

- Have you joined any online communities or followed critics who champion diverse docs?

- Are you watching films from outside your default genres or regions?

- Do you regularly update your interests and preferences on platforms like tasteray.com?

If you’re not, you’re leaving discovery on the table.

The secret isn’t in the algorithm—it’s in your engagement with it.

Final thoughts: don’t let the algorithm have the last word

Documentary overload isn’t going away. But neither is your capacity for curiosity, discernment, and surprise. The next time you see a bland, endless scroll of “personalized” suggestions, remember: You have the tools—and now the insider knowledge—to break the cycle.

Don’t settle for boredom. Hack your way to personalized recommendations for documentaries that matter, spark, and maybe even change you. The algorithm is powerful, but your taste, your mood, and your willingness to explore are the real engines of discovery.

Sources

References cited in this article

- IndieWire: Best Documentaries 2024 (indiewire.com)

- Parrot Analytics: Documentary Demand (parrotanalytics.com)

- Vogue: Best Documentaries 2024 (vogue.com)

- Rotten Tomatoes: Best Documentaries 2023 (editorial.rottentomatoes.com)

- Psychology Today: The Choice Paradox (psychologytoday.com)

- The Decision Lab: Paradox of Choice (thedecisionlab.com)

- New Trader U: Paradox of Choice (newtraderu.com)

- Nielsen: Streaming Unwrapped 2024 (nielsen.com)

- CTAM: Streaming Consumer Trends (ctam.com)

- ISEMAG: 2024 Video Streaming Trends (isemag.com)

- Frontiers in Psychology: Choice Overload 2024 (pmc.ncbi.nlm.nih.gov)

- The Decision Lab: Choice Overload Bias (thedecisionlab.com)

- Wiley: Meta-analytic Review of Choice Overload (academic.oup.com)

- Frontiers in Big Data: Video Recommender Systems (frontiersin.org)

- Knight First Amendment Institute: Recommenders With Values (knightcolumbia.org)

- arXiv: Survey on Recommender Systems 2024 (arxiv.org)

- arXiv: Large Language Model Simulator for Cold-Start Recommendation (arxiv.org)

- KDnuggets: Innovations in Recommendation Systems with LLMs (kdnuggets.com)

- Medium: Solving the Cold Start Problem (medium.com)

- Exploding Topics: Personalization Stats (explodingtopics.com)

- Forbes: Personalization vs Privacy (forbes.com)

- Statista: Personalized Brand Experiences (statista.com)

- Built In: AI in the Workplace (builtin.com)

- Insider Monkey: Best AI Assistants 2024 (insidermonkey.com)

- Synthesia: AI Statistics 2024 (synthesia.io)

- TasteRay Official (tasteray.com)

- LinkedIn: TasteRay (linkedin.com)

- Harvard Business School: Subscription Fatigue (hbswk.hbs.edu)

- Lexology: Subscription Fatigue (lexology.com)

- Yahoo Finance: Stream Fatigue (finance.yahoo.com)

- Computing Taste: Algorithms and the Makers of Music Recommendation (ijoc.org)

- Neuroscience of Taste (bmcneurosci.biomedcentral.com)

- Springer: Review of Recommender Systems (link.springer.com)

- Highbrow: Why Mood Matters (gohighbrow.com)

- NCBI: Mood-Tracking Apps (ncbi.nlm.nih.gov)

- Tony Fahkry: Mood and Success (tonyfahkry.com)

- Wiley: Filter Bubbles in Recommender Systems (wires.onlinelibrary.wiley.com)

- arXiv: Filter Bubbles Review (arxiv.org)

- PNAS: YouTube Filter Bubbles (pnas.org)

- The Fast Mode: AI in Streaming 2024 (thefastmode.com)

- Molten Cloud: AI in Streaming (moltencloud.com)

- Sage Journals: Spotify User Study (journals.sagepub.com)

- Andrew G Gibson: Information Silos and Echo Chambers (andrewggibson.com)

- arXiv: Systematic Review of Echo Chambers (arxiv.org)

- New Day Starts: Perils of Echo Chambers (newdaystarts.co.uk)
