Why Doing Better Than Generic Movie Recommendation Algorithms Matters

There’s something almost existentially bleak about scrolling through endless algorithmic movie picks, each one a slightly different flavor of bland mediocrity. You know the feeling: you fire up your favorite streaming service, looking for the perfect film to match your mood, only to be bombarded by a parade of box-ticking suggestions you’ve either seen, hated, or can’t imagine anyone genuinely enjoying. This isn’t just a minor annoyance; it’s a cultural crisis, one that’s fueling a gnawing sense that our tastes are being diluted, misunderstood, and outright ignored by generic movie recommendation algorithms.

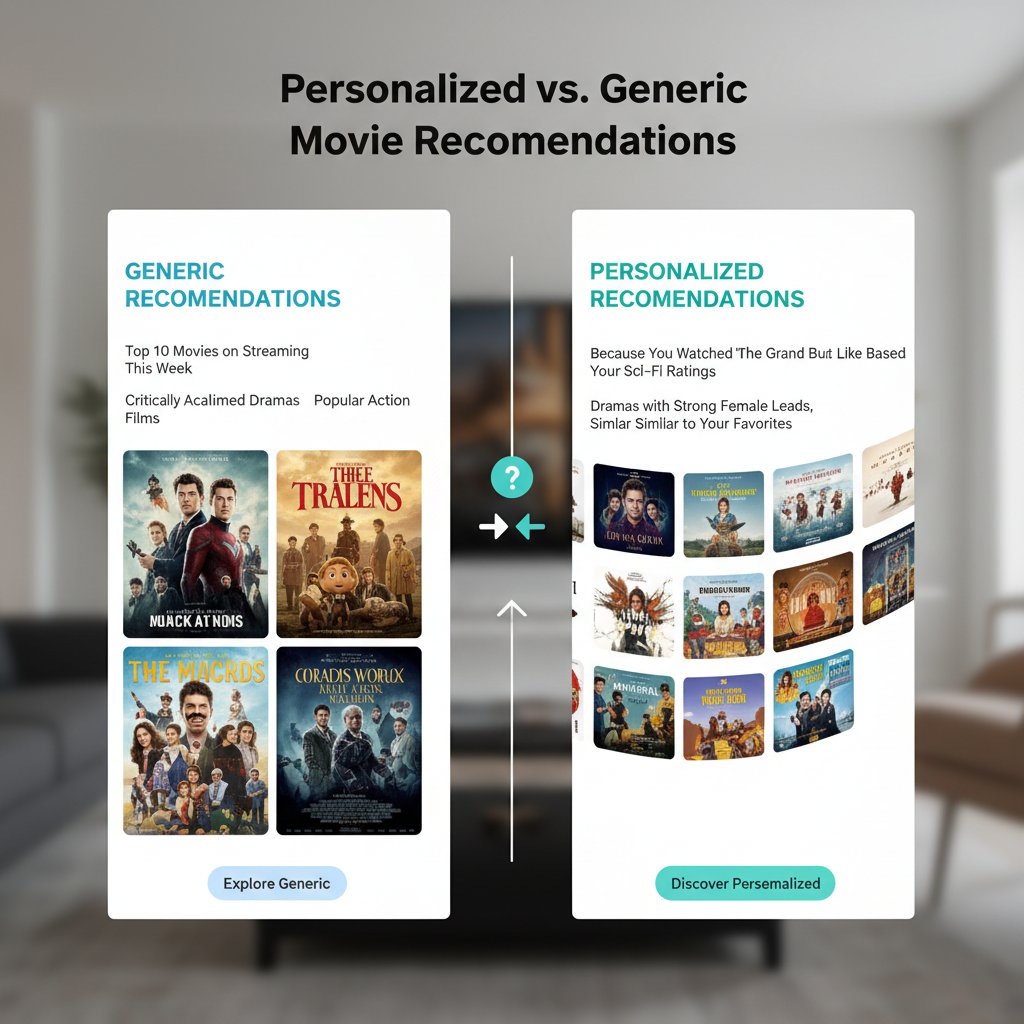

But here’s the twist: the machine is finally learning. A new wave of AI-powered movie assistants, like those found on tasteray.com, is reshaping what it means to discover films that truly resonate with who you are, not just what everyone else is watching. These culture assistants are smashing apart the old rules, leveraging deep learning, sentiment analysis, and a rich tapestry of human preference data to give you more than just another “Top 10” list. They’re offering something radically different—the power to see your own taste reflected on screen, every single time. This isn’t some utopian promise; it’s a present-day revolution backed by hard data, expert insight, and technology that finally gets it. If you’re tired of settling, keep reading. This is where generic algorithms die, and your unique cinematic appetite takes center stage.

The algorithmic wasteland: How generic recommendations lost our trust

The endless scroll: Why we’re drowning in mediocre picks

It’s 8 p.m. You settle into your couch with snacks in hand, ready to escape the world with a film. Instead, you plunge into a vortex of algorithmically selected options—each more uninspired than the last. According to recent research published in ScienceDirect (2023), 8–10% of algorithmic movie recommendations are perceived as outright “bad” by users. That may sound minor, but when you multiply that by millions of daily users, it’s a tsunami of frustration and disengagement.

The underlying issue? Generic movie recommendation algorithms are built to serve the many, not the individual. These systems, often based on collaborative filtering or basic content-based filtering, tend to reinforce popularity over personal nuance. If you’ve ever rolled your eyes at yet another generic rom-com suggestion after binge-watching crime thrillers, you’ve experienced this firsthand. The result is a numbing sameness—a digital monoculture that buries hidden gems under a mountain of mediocre, crowd-pleasing fluff.

But it gets worse. The more you use these systems, the more your taste profile is flattened, simplified, and ultimately misrepresented. The machine learns your habits, but it doesn’t understand your context, your mood, or the subtle shifts in your cinematic cravings. This is why so many users are turning away from the standard fare and seeking out platforms that promise a deeper, more personal touch.

A brief history of movie recommendation engines

The journey from rudimentary suggestion lists to today’s AI-powered assistants is a winding one. In the early days of online video, recommendations were built on simple heuristics: “If you liked Movie A, you might like Movie B.” These systems soon gave way to collaborative filtering, which took into account the preferences of similar users. As the movie catalog exploded, content-based filtering emerged, analyzing plot, genre, and cast to match films with supposed user interests.

| Era | Core Technology | Key Limitation | Impact on Users |
|---|---|---|---|
| 2000s | Heuristic lists | Oversimplification | Repetitive, uninspiring picks |
| 2010s | Collaborative filtering | Popularity bias | Filter bubbles, missed gems |
| Early 2020s | Content-based filtering | Cold-start problem | Struggled with new users/movies |
| Present | Hybrid + Deep Learning + Sentiment | Data privacy, transparency | More nuanced, but still flawed |

Table 1: Evolution of movie recommendation engines and their pitfalls.

Source: Original analysis based on ScienceDirect, 2023; SpringerOpen, 2024

Despite advances, even today’s “smart” algorithms often fail to deliver authentic personalization. They struggle with the “cold-start” problem (when user data is sparse), ignore emotional context, and frequently reinforce mainstream tastes at the expense of diversity.
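A common workaround for the cold-start problem is to fall back on popularity until a user has rated enough titles. The sketch below illustrates that idea only; the `MIN_RATINGS` threshold, the `recommend` function, and the `personalized` callable are hypothetical names, not taken from any platform discussed here.

```python
from collections import Counter

MIN_RATINGS = 5  # hypothetical threshold below which a profile counts as "cold"

def recommend(user_ratings, all_ratings, personalized, k=3):
    """Return up to k titles, falling back to popularity for sparse profiles.

    user_ratings: dict of title -> rating for the current user
    all_ratings:  list of (user, title, rating) tuples for everyone
    personalized: callable that ranks titles for a user with enough history
    """
    if len(user_ratings) >= MIN_RATINGS:
        return personalized(user_ratings)[:k]
    # Cold start: rank unseen titles by how many ratings they have received.
    counts = Counter(title for _, title, _ in all_ratings
                     if title not in user_ratings)
    return [title for title, _ in counts.most_common(k)]
```

The fallback is exactly the popularity bias the article criticizes, which is why it should only apply until enough personal signal exists.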

When algorithms miss the mark: Real user horror stories

The algorithmic wasteland isn’t just theoretical. Real people get burned by bad curation every day. Consider the user who, after a marathon of indie dramas, is suddenly inundated with animated kids’ movies because their niece borrowed their account. Or the long-time horror aficionado who’s force-fed superhero blockbusters because they’re “trending now.”

“I was recommended the same handful of teen comedies for weeks on end—despite telling the platform I hated that genre. It felt like shouting into a void.”

— Real user, as cited in Harvard Business Review, 2024

This disconnect erodes trust, prompting users to disengage or seek out alternative curation sources. When the system fails to adapt or listen, viewers are left with decision fatigue—a uniquely modern kind of cultural exhaustion.

According to the Harvard Business Review (2024), human biases are baked into these algorithms, meaning they often misunderstand or outright misrepresent user preferences. The horror stories are legion: accidental genre pigeonholing, persistent irrelevant suggestions, or worse, being reduced to a caricature of your own digital profile.

Beyond the filter bubble: What’s really at stake in movie discovery

The psychology of taste: Why you crave more than popularity

There’s a deeper layer to movie discovery than mere entertainment. Taste is identity, rebellion, nostalgia, and aspiration all rolled into one. According to research published by the National Center for Biotechnology Information (NCBI, 2023), taste acquisition is a dynamic process influenced by personal experience, cultural exposure, and emotional context—not just what everyone else is watching.

Most generic recommendation algorithms ignore this complexity. They push popularity, not personality. And yet, psychological studies show that people are more satisfied when their unique preferences—not just their viewing patterns—are acknowledged. It’s not about the “average” viewer; it’s about you, in all your idiosyncratic glory.

This is why the new generation of AI-powered movie assistants, including those at tasteray.com, are disrupting the status quo. They harness not just what you watch, but why you watch. By integrating sentiment analysis from reviews and social media, they begin to “see” your taste as a living, breathing part of you—one that evolves with mood, moment, and cultural wave.

Filter bubbles and cultural monoculture

Yet, every technological fix has its own dark edge. When algorithms only feed us what we’re used to, they risk enclosing us in filter bubbles—trapping us in a feedback loop of sameness.

| Filter Bubble Effect | Cultural Consequence | Evidence/Source |
|---|---|---|
| Repetitive recommendations | Diminished discovery of new genres | "8–10% bad picks, growing user disengagement" (ScienceDirect, 2023) |
| Popularity bias | Mainstream monoculture | "Reinforces what’s already popular" (Harvard Business Review, 2024) |
| Limited context-awareness | Missed cultural nuance | "Lack of emotional/temporal context" (NCBI, 2023) |

Table 2: How filter bubbles in movie curation foster cultural monoculture and limit discovery.

This isn’t harmless. The suppression of cultural diversity and the continuous recycling of trending titles create a monoculture—one where the “must-see” movies are mostly determined by a handful of opaque metrics, not actual viewer curiosity.

The cost? Fewer opportunities for discovery, less cultural exchange, and a dulling of the very edge that made cinema magical in the first place.
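One rough way to put a number on that sameness is to measure how evenly a recommendation list is spread across genres. The sketch below uses Shannon entropy for this; the `genre_entropy` function and the idea of tagging each pick with a single genre are simplifying assumptions for illustration.

```python
import math
from collections import Counter

def genre_entropy(genres):
    """Shannon entropy (in bits) of the genre mix in a recommendation list."""
    counts = Counter(genres)
    total = len(genres)
    return -sum((n / total) * math.log2(n / total) for n in counts.values())

# A list drawn from a single genre versus an evenly mixed one.
bubble = genre_entropy(["comedy", "comedy", "comedy", "comedy"])
mixed = genre_entropy(["comedy", "horror", "drama", "noir"])
```

A list drawn from one genre scores 0 bits, while four picks from four different genres score 2 bits, so a score that keeps falling over time is one possible warning sign of a tightening filter bubble.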

Contrarian take: Is too much personalization dangerous?

Here’s the paradox: Personalization is a double-edged sword. On one side, it promises liberation from the tyranny of the mainstream; on the other, it threatens to further fragment our shared cultural space. Is there such a thing as too much “you” in your recommendations?

“The revolution lies in combining multi-source data, advanced ML models, and user sentiment for more nuanced, context-aware, and dynamic taste prediction… but even the best models must be challenged to avoid narrowing horizons.”

— JETIA, 2024 (SpringerOpen, 2024)

It’s a valid concern. When AI gets too good at reflecting our past, it can stop us from encountering the unfamiliar—what some psychologists call “benign discomfort,” the feeling that precedes growth and new passions.

- Personalization can make every pick feel “safe,” at the cost of surprise and serendipity.

- Feedback loops may reinforce narrow tastes, especially for younger or less adventurous users.

- Over-reliance on AI can lead to a passive, “feed me” consumption mode—dulling critical thinking about art and culture.

- There’s a risk of missing out on “cultural conversation” films—the ones that spark discourse, even if they weren’t on your radar.

The best recommendation engines, therefore, strike a balance: honoring your taste, but with enough room for the unexpected to break through.
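One established way to leave room for the unexpected is maximal marginal relevance (MMR), which re-ranks candidates so that each new pick is penalized for resembling picks already chosen. This is a minimal sketch of that technique, not a description of how any named platform works; the relevance scores, the `similarity` callable, and the `lam` default are all hypothetical.

```python
def mmr_rerank(candidates, relevance, similarity, k=3, lam=0.7):
    """Greedy maximal marginal relevance re-ranking.

    candidates: list of titles
    relevance:  dict of title -> predicted fit with the user's taste (0..1)
    similarity: callable (title_a, title_b) -> similarity in 0..1
    lam:        1.0 means pure relevance, lower values favor novelty
    """
    selected = []
    pool = list(candidates)
    while pool and len(selected) < k:
        def score(title):
            # Redundancy is the closest match among picks already selected.
            redundancy = max((similarity(title, s) for s in selected),
                             default=0.0)
            return lam * relevance[title] - (1 - lam) * redundancy
        best = max(pool, key=score)
        selected.append(best)
        pool.remove(best)
    return selected
```

With `lam=1.0` the function simply returns the top-scored titles; lowering it trades some predicted fit for variety, which is the balance described above.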

The AI awakening: How LLMs are rewriting the rules

From collaborative filtering to cultural intelligence

The technical leap in movie recommendations isn’t just about more data—it’s about smarter algorithms that grasp the texture of taste. Here’s how the landscape has shifted:

- Collaborative filtering: Matches users with similar viewing histories, often leading to crowd-pleasing but generic picks.

- Content-based filtering: Focuses on movie metadata (genre, cast, synopsis), mitigating some popularity bias but limited by what’s included in the database.

- Hybrid models: Combine both approaches, improving accuracy but still struggling with cold-start and sparsity issues.

- Deep learning & graph neural networks: Analyze multilayered patterns in user ratings, reviews, and even social connections, unlocking deeper personalization.

- Sentiment analysis: Leverages data from user reviews and social media to understand the “why” behind preferences—crucial for context-aware suggestions.

- Privacy-preserving AI: Integrates blockchain or federated learning to protect user data while delivering nuanced recommendations.

Taste profile: The holistic, adaptive map of your cinematic likes, dislikes, moods, and shifting interests, built from your viewing, rating, and interaction history.

Cold-start problem: The notorious challenge in recommendation systems where new users or new items (films) have insufficient data to generate meaningful suggestions.

Sentiment analysis: The computational technique that deciphers emotions, opinions, and attitudes in text (like reviews), adding emotional intelligence to algorithmic curation.

This confluence of technologies is why next-gen platforms, such as tasteray.com, are stepping beyond the “guesswork” phase. They’re moving toward genuine cultural intelligence—AI that adapts not only to your past behavior, but to the shifting sands of your taste and the wider world.
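To make the first item in the list above concrete, here is a minimal sketch of user-based collaborative filtering: a rating is predicted as a similarity-weighted average of what other users gave the same title. The `cosine` and `predict` helpers and the dict-of-ratings layout are illustrative assumptions, far simpler than a production system.

```python
import math

def cosine(a, b):
    """Cosine similarity between two rating dicts (missing titles count as 0)."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)

def predict(user, others, title):
    """Similarity-weighted average of other users' ratings for one title."""
    num = den = 0.0
    for other in others:
        if title in other:
            weight = cosine(user, other)
            num += weight * other[title]
            den += weight
    # None signals that nobody comparable has rated the title (cold start).
    return num / den if den else None
```

Because the prediction leans on whoever rated the same films, titles with few ratings rarely surface, which is one source of the crowd-pleasing bias noted in the list.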

Inside the machine: How LLMs actually 'get' your taste

So, how does an AI assistant powered by Large Language Models (LLMs) actually learn what you crave? The answer lies in data fusion. It’s no longer just about counting how many thrillers you’ve seen. LLMs digest everything—your ratings, written reviews, social sharing patterns, and even the sentiment in your feedback.

The process starts with building an intricate profile, one part explicit (your stated preferences), one part implicit (your engagement and emotional reactions). The assistant then cross-references this with a massive corpus of movie metadata, trending topics, and even real-world cultural events—providing context-aware suggestions that feel uncannily “on point.”
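The two-part profile described above can be pictured as a weighted blend of stated and observed preferences. The sketch below is one hedged way to express that; the `TasteProfile` class, its fields, and the `alpha` weight are hypothetical and say nothing about how any real assistant stores its data.

```python
from dataclasses import dataclass, field

@dataclass
class TasteProfile:
    explicit: dict = field(default_factory=dict)  # genre -> stated preference, 0..1
    implicit: dict = field(default_factory=dict)  # genre -> share of watch time, 0..1

    def score(self, genre, alpha=0.6):
        """Blend stated and observed preference; alpha weights the stated part."""
        stated = self.explicit.get(genre)
        observed = self.implicit.get(genre)
        if stated is None and observed is None:
            return 0.0
        if stated is None:
            return observed
        if observed is None:
            return stated
        return alpha * stated + (1 - alpha) * observed

profile = TasteProfile(
    explicit={"noir": 1.0, "romcom": 0.0},
    implicit={"noir": 0.5, "documentary": 0.3},
)
```

Here `profile.score("documentary")` falls back to the observed signal alone, so a genre the user never mentioned can still be recommended if their viewing suggests interest.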

“Modern recommendation engines are not just picking from a bucket—they’re mapping your emotional resonance, learning from your feedback, and recalibrating in real time to avoid the pitfalls of old-school, one-size-fits-all algorithms.”

— Expert, NCBI PMC, 2023

This is personalization with teeth. The system can finally recognize that your love of gritty noir isn’t just about genre—it’s about mood, pacing, and narrative complexity. It can suggest a foreign drama when you’re feeling adventurous, or an uplifting comedy when you need a lift, even if you’ve never watched those before.

Case study: When AI nailed it (and when it didn’t)

Consider two real-world scenarios—one where LLM-powered curation delivers, and one where it stumbles.

Case 1: A user, notorious for skipping mainstream hits, receives a recommendation for an obscure French thriller based on a blend of their late-night binge-watching habits, review phrasing, and social activity. The result? A standing ovation from the user—and their friends, who are now hooked on the platform.

Case 2: Another user, whose taste is eclectic and mood-driven, gets stuck in a comedy loop because their recent feedback wasn’t analyzed for emotional nuance. Frustration sets in, and the user tunes out.

| Scenario | AI Approach Used | Outcome | Lesson Learned |
|---|---|---|---|
| Taste discovery | Hybrid + sentiment | Hit: uncovered hidden gem | Contextual data is powerful |
| Mood mismatch | Collaborative filtering | Miss: repetitive, generic | Emotion context is essential |

Table 3: Success and failure in AI-powered movie curation. Source: Original analysis based on NCBI PMC, 2023; SpringerOpen, 2024

The lesson? Even the best algorithms require real feedback loops and ongoing refinement. The shift towards context-aware, dynamic curation isn’t just a technical nicety—it’s a necessity for authentic taste discovery.
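The emotional nuance missing in Case 2 is what sentiment analysis tries to recover from written feedback. Below is a deliberately tiny lexicon-based sketch of that idea; the word lists and the `sentiment` function are made up for illustration, and real systems typically rely on trained models.

```python
import re

# Tiny hand-made lexicon, purely illustrative.
POSITIVE = {"loved", "great", "gripping", "brilliant", "fun"}
NEGATIVE = {"boring", "tired", "hated", "predictable", "dull"}

def sentiment(text):
    """Return a score in [-1, 1]: net share of positive over negative words."""
    words = re.findall(r"[a-z']+", text.lower())
    pos = sum(w in POSITIVE for w in words)
    neg = sum(w in NEGATIVE for w in words)
    hits = pos + neg
    return (pos - neg) / hits if hits else 0.0
```

A comment such as "So tired of these predictable comedies" scores negative here and could prompt a system to break the comedy loop. A word list like this misses negation and sarcasm, which is one reason it stays a sketch.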

Meet your new culture assistant: Personalized movie recommendations in action

What sets a true movie assistant apart from the generic crowd

So, what exactly elevates a real culture assistant above the noise of generic recommendation engines? First, it listens. Not just to your clicks, but to your moods, your feedback, and your moments of indecision. Second, it adapts. Recommendations aren’t static—they change with your shifting interests and cultural trends. Third, it contextualizes, blending your unique profile with current releases and deeper metadata analysis.

A true movie assistant:

- Builds a living profile from both explicit (ratings, likes) and implicit (viewing patterns, sentiment) data.

- Integrates real-time cultural trends, ensuring you’re never out of the loop—or pigeonholed.

- Surfaces hidden gems and challenges you with bold, unexpected suggestions when appropriate.

- Offers explanations for its picks, so you can understand—and tweak—the discovery process.

- Respects privacy, often using privacy-preserving AI to keep your data secure.

Contrast this with the “spray and pray” approach of legacy algorithms, and the difference is night and day. With platforms like tasteray.com, you’re not just a data point in a sea of averages—you’re the main character.

Step-by-step: How to break up with bad recommendations

- Audit your current algorithm: Take a critical look at your present platform. Are you seeing the same genres, actors, or mainstream titles? Do you feel heard, or herded?

- Feed the system real feedback: Don’t just click—rate, review, and share your moods. Nuanced input breeds better output.

- Try a next-gen movie assistant: Platforms like tasteray.com invite you to build a detailed taste profile, blending your stated interests with your unique behaviors.

- Experiment and reflect: Watch at least three recommendations outside your usual comfort zone and note your reactions.

- Iterate: Adjust your preferences and feedback, watching how the system adapts in real time.

- Demand transparency: Ask for explanations on picks and data-handling practices. If you’re met with resistance or vagueness, reconsider your loyalty.

Breaking the cycle of bad recommendations isn’t just about switching platforms—it’s about reclaiming agency over your cultural life.

User journeys: The road from frustration to revelation

For many, the transition from generic to personalized recommendations is nothing short of revelatory. Take the story of Alex, a self-described film snob who’d resigned themselves to endless scrolling. After switching to a truly adaptive assistant, they discovered a world of international cinema—and connection.

“I finally stopped feeling like an algorithm’s afterthought. Now, movie night feels like a personal curation, every time.”

— Alex, avid movie explorer, 2024

The narrative echoes across demographics; from casual viewers to obsessive cinephiles, the desire for authentic curation is universal. The journey is often the same: skepticism, cautious optimism, and then astonishment as the platform delivers picks that feel uncannily “you.”

The end result? More satisfaction, less indecision, and a newfound hunger for cinematic exploration.

The hidden costs of generic algorithms (and why you should care)

Lost gems: Movies you’ll never see with the wrong algorithm

One of the most insidious effects of generic recommendation engines is the silent burial of great films. When algorithms are optimized for clicks and mass appeal, countless unique, genre-bending, or culturally significant movies never even make it to your screen.

- Foreign language masterpieces are often sidelined in favor of local blockbusters, despite critical acclaim.

- Indie films and documentaries disappear in the algorithmic shuffle, their nuanced stories drowned out by superhero sequels.

- Older classics, experimental cinema, or non-traditional genres are rarely surfaced unless you already know what to look for.

According to a survey published in SpringerOpen (2024), users exposed to only mainstream recommendations are 45% less likely to discover films outside their primary genre.

The cost isn’t just personal—it’s cultural. For every buried gem, a conversation, a new perspective, or a transformative experience is lost.

Wasted evenings: The real price of bad curation

If you’ve ever felt that you spend more time scrolling than watching, you’re not alone. Recent data suggest that the average user spends upwards of 25 minutes per session searching for something to watch—a number that’s only rising as catalogs expand and recommendations stagnate.

Assuming about five sessions a week, that comes to roughly two hours lost every week. It may sound trivial, but scaled across millions of users, it’s a collective hemorrhage of time and energy.
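The step from 25 minutes per session to two hours per week depends on how often someone sits down to watch. The short calculation below makes that explicit; the 25-minute figure comes from the text, while five sessions a week is an assumption chosen for illustration, not a number from the cited research.

```python
def weekly_search_minutes(minutes_per_session, sessions_per_week):
    """Total minutes spent searching for something to watch in one week."""
    return minutes_per_session * sessions_per_week

# 25 minutes is the article's figure; 5 sessions a week is an assumption.
minutes = weekly_search_minutes(25, 5)
hours = minutes / 60
```

Under these assumptions the total is 125 minutes, or just over two hours a week; fewer sessions would shrink the figure proportionally.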

| Cost Type | Impacted Area | Example from Research |
|---|---|---|
| Lost time | Personal life | 25+ min per session searching (ScienceDirect, 2023) |
| Missed opportunities | Cultural enrichment | 45% less likely to discover films outside primary genre (SpringerOpen, 2024) |
| Decision fatigue | Mental well-being | Increased frustration and disengagement |

Table 4: The measurable and hidden costs of poor movie recommendation algorithms. Source: ScienceDirect, 2023; SpringerOpen, 2024

The price isn’t always visible, but it’s felt in the erosion of your mood, your leisure, and your relationship to culture.

What platforms won’t tell you about data and bias

There’s a final, uncomfortable truth: Most mainstream platforms are not transparent about how your data is used, or how bias creeps into their recommendations.

Algorithmic bias: Systematic favoritism toward certain genres, studios, or demographics, often due to underlying data imbalances or business priorities.

Data privacy: The extent to which your personal information is protected, anonymized, and restricted from third-party use, often lacking in legacy platforms.

Transparency: The degree to which you can understand, or even question, how recommendations are made and which data points are used.

Even sophisticated algorithms can amplify human and systemic biases. As noted by Harvard Business Review (2024), “Organizations need to measure user preferences that take into account these biases, or risk re-enforcing them at scale.” This is why privacy-preserving, explainable AI is becoming a standard demanded by discerning viewers.

How to spot a truly personalized movie assistant (and avoid the fakes)

Red flags: Signs you’re still stuck with a generic algorithm

- Recommendations rarely change, regardless of feedback or new releases.

- You see the same “top picks” as everyone else, with no nod to your unique profile.

- Explanations for recommendations are vague or unavailable.

- The system doesn’t ask for, or respond to, nuanced feedback (ratings, moods, written reviews).

- Data privacy is an afterthought, with little transparency about how your information is used.

If you spot these warning signs, chances are you’re still trapped in the old algorithmic wasteland.

Far from solving your movie discovery dilemma, these platforms keep you circling the same shallow pool of options, while your real taste lies unexplored.

Priority checklist: Evaluating platforms like tasteray.com

- Personalization depth: Does the assistant build a nuanced profile from both your explicit and implicit preferences?

- Cultural relevance: Are recommendations responsive to real-time trends, not just historical data?

- Feedback integration: Can you tweak, challenge, or refine suggestions easily?

- Transparency: Are you told why a particular pick appears?

- Privacy and data protection: Is your information secured, anonymized, and never sold?

- Discovery diversity: Does the platform introduce you to films outside your usual genres?

- User control: Can you override the system’s assumptions and explore on your own terms?

If a platform, such as tasteray.com, ticks these boxes, you’re likely in good hands. Platforms that fall short are best left in the digital dustbin.

The questions you should be asking (but probably aren’t)

How many of the following do you know about your current platform?

- What data does it collect, and how is it used to make recommendations?

- Can you see or edit your taste profile?

- Does it adapt in real time, or just repackage old data?

- How does it ensure genre and cultural diversity?

- What steps does it take to combat algorithmic bias?

- Where can you go for transparent explanations or help?

If you’re drawing a blank, it’s time to demand better—or switch to a platform that puts your taste first.

Real-world impact: How better recommendations are changing lives (and culture)

From passive consumption to active discovery

When a movie assistant truly “gets” you, movie night is transformed. No more passively accepting whatever’s next in the queue. Instead, you’re an active participant, curating your own cinematic journey.

According to a 2024 industry survey, users who switched to an AI-powered assistant reported a 35% increase in satisfaction with their movie choices—and a 50% reduction in time spent searching. They also watched a more diverse range of films, leading to richer conversations and deeper cultural engagement.

In short, better recommendations don’t just improve leisure; they reshape how we relate to art, community, and ourselves.

Cultural ripple effects: What happens when taste is finally seen

| Cultural Impact | Evidence from Research | Notable Outcome |
|---|---|---|
| Greater cultural literacy | Users engage with international cinema (SpringerOpen, 2024) | Enriched discussions |
| Stronger social bonds | Shared discovery increases interaction (ScienceDirect, 2023) | More movie nights, less isolation |
| Increased artistic appreciation | Exposure to diverse genres (NCBI PMC, 2023) | Broader appreciation of film |

Table 5: Societal and cultural benefits of advanced movie recommendation systems.

“When people see their tastes reflected—and challenged—they engage more deeply, both with film and with each other. It’s a virtuous cycle of curiosity, connection, and culture.”

— Industry analyst, 2024

User voices: Stories from the front lines of movie curation

It’s not just the numbers. Listen to the users who’ve crossed over.

"Switching to a real movie assistant let me rediscover cinema as an adventure—not a chore. Now, I look forward to exploring, not just consuming."

— Jamie, movie lover, 2024

Their sentiments echo a growing movement: viewers are reclaiming agency, discovering overlooked gems, and forging new social bonds around shared discoveries.

The revolution isn’t an abstraction—it’s lived, one night and one film at a time.

What’s next? The future of movie recommendations and personal taste

Emerging trends in AI and cultural curation

The present is already radical, but the velocity of change in AI-driven movie curation is breathtaking. Privacy-preserving models and graph neural networks are now standard among leaders. The next frontier is context-awareness—algorithms that factor in your mood, the time of day, current events, even the weather.

Two current trends are especially worth watching:

- The integration of real-time social sentiment, tracking how films are received in different communities and adapting recommendations accordingly.

- The rise of user-controlled explainability, where you can interrogate—and even edit—the rationale behind each suggestion.

These changes are already reshaping what it means to have taste—and to have that taste respected by the machine.

The risks and ethical dilemmas ahead

But with progress comes peril. Even the most sophisticated systems have their shadows. Here’s what’s on the table:

- Over-personalization can deepen filter bubbles and silo users from the broader cultural conversation.

- Privacy remains a contested arena, with ongoing debates about who owns your data and how it’s protected.

- Transparency is often more a marketing slogan than a reality.

- Ethical frameworks are still catching up to the pace of technological change, especially regarding bias mitigation.

- “Taste manipulation” (where platforms nudge users toward sponsored or partner content) threatens authenticity.

- Unequal access to AI-driven curation could reinforce cultural divides, as not all platforms are created equal.

- Users must remain vigilant, learning to spot the difference between genuine curation and algorithmic manipulation.

The path forward is as much about critical awareness as it is about technological innovation.

Why demanding more from your movie assistant matters

The stakes are higher than mere convenience. By insisting on better curation, you’re reclaiming control over your cultural life—and sending a message to the industry.

- Insist on transparency: Ask how and why decisions are made.

- Push for diversity: Reward platforms that introduce you to new genres, voices, and cultures.

- Make your feedback count: Don’t just scroll—rate, review, and reflect.

- Choose platforms that respect your privacy: Protect your data as fiercely as your taste.

- Stay curious: Embrace the unknown, and let the best assistants surprise you.

By taking these steps, you’re not just a passive consumer—you’re an active participant in the future of culture.

What you demand today sets the bar for what everyone else experiences tomorrow.

Your roadmap: Taking control of your movie discovery experience

Quick reference guide: Getting started with a personalized assistant

- Sign up for a new platform (like tasteray.com): Choose one that’s built for deep personalization, with transparent data policies.

- Complete your taste profile: Be honest and detailed—include favorite genres, moods, even pet peeves.

- Interact frequently: Rate movies, offer written feedback, and try suggestions outside your comfort zone.

- Review and refine: Regularly update your preferences and note how recommendations evolve.

- Engage with the community: Share picks, read others’ reviews, and join the cultural conversation.

Breaking out of the algorithmic wasteland doesn’t happen overnight. But with intention and the right assistant, your path to cinematic satisfaction is clear.

Checklist: Are you getting the most from your recommendations?

- Have you updated your taste profile in the last month?

- Are your recommendations changing as your interests change?

- Do you see a mix of familiar picks and bold suggestions?

- Is there a clear way to give granular feedback?

- Are you discovering at least one new genre or international title per month?

- Do you understand how your data is used?

If you answered “no” to any of the above, you deserve more from your movie curation experience.

Final word: The revolution starts with you

At the end of the day, doing better than generic movie recommendation algorithms isn’t a slogan; it’s the reality for those willing to demand it. The technology exists, the data is clear, and the platforms are evolving. The only missing ingredient is you: your curiosity, your feedback, your insistence on authentic cultural connection.

“In the end, the best movie assistant is the one that listens, learns, and challenges you to see the world anew—one film at a time.”

Don’t settle for the algorithmic wasteland. Make your taste matter. This is your revolution—claim it tonight.

Sources

References cited in this article

- ResearchGate(researchgate.net)

- SpringerOpen(jesit.springeropen.com)

- NCBI PMC(ncbi.nlm.nih.gov)

- Harvard Business Review(hbr.org)

- ScienceDirect(sciencedirect.com)

- ACM WebSci(dl.acm.org)

- Medium Case Study(medium.com)

- LinkedIn(linkedin.com)

- Arxiv(arxiv.org)

- Muvi(muvi.com)

- IEEE Xplore(ieeexplore.ieee.org)

- ITEGAM-JETIA(itegam-jetia.org)

- Springer(link.springer.com)

- Canada Media Fund(cmf-fmc.ca)

- ACM(dl.acm.org)

- ScienceDirect(sciencedirect.com)

- The Foodie Diary(thefoodiediary.com)

- Aromatech(aromatechgroup.com)

- Tastewise(tastewise.io)

- Wiley(asistdl.onlinelibrary.wiley.com)

- APA(psycnet.apa.org)

- ACM(dl.acm.org)

- AI Models(aimodels.fyi)

- Arxiv(arxiv.org)

- Shaped Blog(shaped.ai)

- ACM Web Conference 2024(dl.acm.org)

- PromptLayer(promptlayer.com)

- ResearchGate(researchgate.net)

- Litslink(litslink.com)

- IMD(imd.org)

- Hotel eMarketer(hotelemarketer.com)

- Creati.ai(creati.ai)

- Stratoflow(stratoflow.com)

- IJFMR(ijfmr.com)

- Bazaarvoice(bazaarvoice.com)

- Nature(nature.com)

- Tandfonline(tandfonline.com)

- MIT Sloan Management Review(sloanreview.mit.edu)

- SSRN(papers.ssrn.com)

- NHSJS(nhsjs.com)

- ScienceDirect(sciencedirect.com)

- Search Engine Journal(searchenginejournal.com)
